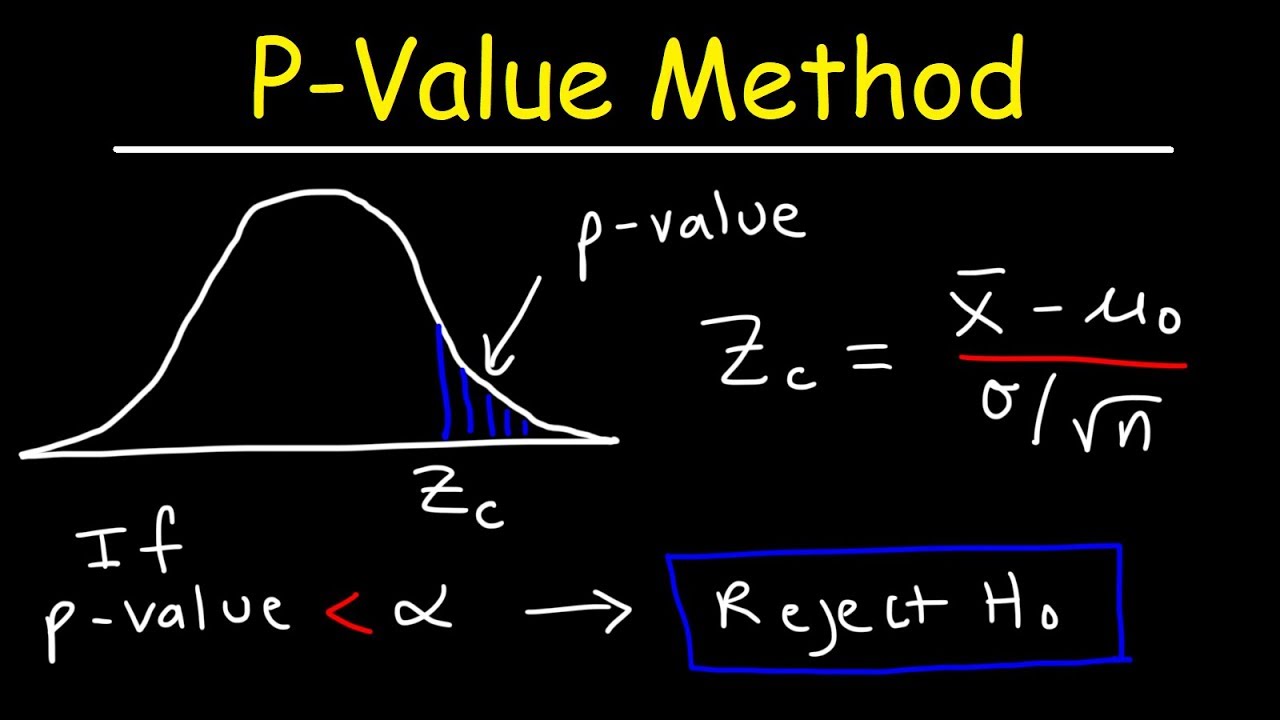

The P-value approach involves determining "likely" or "unlikely" by determining the probability, assuming the null hypothesis were true, of observing a more extreme test statistic in the direction of the alternative hypothesis than the one observed. If the P-value is small, say less than (or equal to) \(\alpha\), then it is "unlikely." And, if the P-value is large, say more than \(\alpha\), then it is "likely."

Specifically, the four steps involved in using the P-value approach to conducting any hypothesis test are:

1. Specify the null and alternative hypotheses.
2. Using the sample data and assuming the null hypothesis is true, calculate the value of the test statistic. For example, to conduct the hypothesis test for the population mean μ, we use the t-statistic \(t^*=\frac{\bar{x}-\mu}{s/\sqrt{n}}\), where \(\bar{x}\) is the sample mean, \(s\) the sample standard deviation and \(n\) the sample size; it follows a t-distribution with \(n - 1\) degrees of freedom.
3. Using the known distribution of the test statistic, calculate the P-value: "If the null hypothesis is true, what is the probability that we'd observe a more extreme test statistic in the direction of the alternative hypothesis than we did?" (Note how this question is equivalent to the question answered in criminal trials: "If the defendant is innocent, what is the chance that we'd observe such extreme criminal evidence?")
4. Set the significance level, \(\alpha\), the probability of making a Type I error, to be small: 0.01, 0.05, or 0.10. If the P-value is less than (or equal to) \(\alpha\), reject the null hypothesis in favor of the alternative hypothesis. If the P-value is greater than \(\alpha\), do not reject the null hypothesis.

For a two-sided test, "more extreme" has to be judged in both tails. For example, in the exact binomial test of \(H_0: p = 0.6\) against \(H_A: p \ne 0.6\) with \(n = 15\) trials, suppose the observed value is \(Y = 3\). Every value of Y whose null probability does not exceed \(P(Y = 3) \approx 0.00165\) counts as at least as extreme, so besides the lower tail \(P(Y \le 3)\) we must also include \(P(Y = 15) = 0.6^{15} \approx 0.00047\). R's exact binomial test, `binom.test()`, reports:

`#> number of successes = 3, number of trials = 15, p-value = 0.002398`

`#> alternative hypothesis: true probability of success is not equal to 0.6`
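The four steps above can be sketched for the one-sample t-test of step 2. This is a minimal, dependency-free Python illustration under stated assumptions: the helper names and the sample data are hypothetical, and the two-sided tail probability of the t-distribution is approximated with Simpson's rule, where in practice you would more likely call a statistics library (for example SciPy's `scipy.stats.t`).

```python
import math
import statistics


def t_statistic(sample: list[float], mu0: float) -> tuple[float, int]:
    """Step 2: t* = (xbar - mu0) / (s / sqrt(n)), with n - 1 degrees of freedom."""
    n = len(sample)
    xbar = statistics.mean(sample)
    s = statistics.stdev(sample)  # sample standard deviation (divisor n - 1)
    return (xbar - mu0) / (s / math.sqrt(n)), n - 1


def t_pdf(x: float, df: int) -> float:
    """Density of Student's t-distribution; lgamma avoids overflow for large df."""
    log_c = math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
    c = math.exp(log_c) / math.sqrt(df * math.pi)
    return c * (1 + x * x / df) ** (-(df + 1) / 2)


def two_sided_p_value(t_star: float, df: int, steps: int = 10_000) -> float:
    """Step 3: P(|T| >= |t*|) = 1 - 2 * (integral of the density from 0 to |t*|),
    approximated with Simpson's rule (steps must be even)."""
    a, b = 0.0, abs(t_star)
    h = (b - a) / steps
    total = t_pdf(a, df) + t_pdf(b, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(a + i * h, df)
    return max(0.0, 1.0 - 2.0 * total * h / 3.0)


def decide(p_value: float, alpha: float) -> str:
    """Step 4: reject H0 when the P-value is less than or equal to alpha."""
    return "reject H0" if p_value <= alpha else "do not reject H0"


# Step 1 (hypothetical data, for illustration only): H0: mu = 5 versus HA: mu != 5.
sample = [5.1, 4.9, 5.6, 5.8, 6.0, 5.4, 5.7, 5.3]
t_star, df = t_statistic(sample, mu0=5.0)
p = two_sided_p_value(t_star, df)
print(f"t* = {t_star:.3f}, df = {df}, P-value = {p:.4f}, decision: {decide(p, alpha=0.05)}")
```

Numerical integration keeps the sketch self-contained; it relies only on the symmetry of the t-density about zero, so one integral from 0 to \(|t^*|\) covers both tails.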

2 SIDED HYPOTHESIS TEST CALCULATOR CODE

With a two-sided test, you should always look at both tails of the distribution. It just so happens that in this example (Y = 2 observed in 15 trials under \(H_0: p = 0.6\)) looking at one tail gives the correct p-value, because the observed value of 2 is really small. More generally, though, you should add the probabilities of precisely those values of Y whose probabilities (under the null hypothesis) do not exceed the probability that Y = 2. The R calculation stores the null probabilities `dbinom(x = 0:15, size = 15, p = 0.6)` in `null_distribution`; its first three entries are the probabilities corresponding to Y = 0, 1, 2.

As pointed out in Two-sided binomial test in Excel, the Clopper-Pearson 2-sided binomial test isn't something you'd want to perform in Excel. Someday, someone somewhere will go to the trouble of developing, carefully validating, and publishing a Visual Basic routine. Why not learn S (or R, its open-source implementation), or pay the licensing fees for SPSS?

Edit: a Visual Basic for Applications (VBA) function, `binom_test_tt(hits As Integer, trials As Integer, P As Double)`, which you would run in Developer mode in Excel, implements the solution outlined by a_statistician above. First it calculates the probability (`oP`) of `hits` successes out of `trials` with success probability `P`. Then, to find the extremes at each tail, it cycles through all possible numbers of hits and, whenever it finds a probability (`nP`) that is more extreme than (i.e., less than) `oP`, it adds it to the sum. Each probability comes from `Binom_Dist(i, trials, P, False)`, where `False` requests the probability of exactly `i` hits rather than a cumulative one.

You can also hack your way through it by hand for particular cases such as the one in your diagram, and I'm confident a_statistician got it right: \(P(Y = 15) = 0.6^{15} \approx 0.00047\) exceeds \(P(Y = 2) \approx 0.00025\), so Y = 15 is not as extreme as the observation.
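The rule a_statistician describes, which the VBA routine also loops over, can be sketched in Python. This is a rough transcription, not the original R or VBA code: `binom_pmf` and `two_sided_binom_p_value` are hypothetical names, and the loop keeps every count whose probability does not exceed the observed one (with a small relative tolerance for floating-point ties), which differs from a strict "less than" only when two probabilities tie exactly.

```python
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    """Null probability P(Y = k) for Y ~ Binomial(n, p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def two_sided_binom_p_value(hits: int, trials: int, p: float) -> float:
    """Sum P(Y = i) over every i whose null probability does not exceed
    P(Y = hits), scanning both tails of the distribution."""
    o_p = binom_pmf(hits, trials, p)   # probability of the observed count
    cutoff = o_p * (1 + 1e-7)          # tolerance so floating-point ties count as equal
    total = 0.0
    for i in range(trials + 1):        # cycle through all possible counts
        n_p = binom_pmf(i, trials, p)
        if n_p <= cutoff:              # at least as extreme as the observation
            total += n_p
    return total


print(f"{two_sided_binom_p_value(2, 15, 0.6):.6f}")
print(f"{two_sided_binom_p_value(3, 15, 0.6):.6f}")
```

For 3 hits this returns about 0.002398, the value R reports; for 2 hits it returns about 0.000279, which is the lower tail \(P(Y \le 2)\) alone.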

You can get a first approximation to the two-sided p-value by assuming the distribution is symmetric, in which case the events \(Y \ge 15 - 2 = 13\) count as being as extreme as \(Y \le 2\), and your approximate p-value is \(P(Y \le 2) + P(Y \ge 13)\). A glance at your picture reveals that symmetry wasn't a good assumption, as the event Y = 13 is quite a bit more likely than the event Y = 2. Indeed, the event Y = 14 is also more likely than the event Y = 2. But the event Y = 15 seems (from your picture) to be less likely than the event Y = 2, so you might include it in your second approximation to the p-value, which should sum the probability of your observed event and of all "more extreme" events: \(P(Y \le 2) + P(Y = 15)\). For your third (and final) approximation, you'd check the probability at Y = 15 itself.
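The three approximations can be checked numerically. A minimal Python sketch, assuming the question's setting (Y = 2 observed, n = 15, \(H_0: p = 0.6\)); the variable names are illustrative and not taken from the original answer.

```python
from math import comb


def pmf(k: int, n: int = 15, p: float = 0.6) -> float:
    """Null probability P(Y = k) for Y ~ Binomial(n, p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


y_obs, n = 2, 15
lower_tail = sum(pmf(k) for k in range(y_obs + 1))  # P(Y <= 2)

# First approximation: pretend the distribution is symmetric about n/2,
# so Y >= n - y_obs = 13 counts as being as extreme as Y <= 2.
approx_symmetric = lower_tail + sum(pmf(k) for k in range(n - y_obs, n + 1))

# Second approximation: add only Y = 15, which looked less likely than Y = 2.
approx_second = lower_tail + pmf(15)

# Third (final) step: include Y = 15 only if P(Y = 15) <= P(Y = 2).
exact = lower_tail + (pmf(15) if pmf(15) <= pmf(y_obs) else 0.0)

for name, value in [("symmetric", approx_symmetric), ("second", approx_second), ("exact", exact)]:
    print(f"{name:>9}: {value:.6f}")
```

Because \(P(Y = 15) \approx 0.00047\) exceeds \(P(Y = 2) \approx 0.00025\), the final value equals the lower tail alone (about 0.000279), while the symmetric approximation (about 0.0274) overstates it roughly a hundredfold.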